How to Turn Substack RSS Into Clean Text for Your AI Assistant

Why "clean data" is the foundation

My AI assistant answers questions using my Substack archive as the knowledge base.

Substack doesn’t provide a public API I can rely on, so the practical interface is the RSS feed.

Let’s dive into how this works and what RSS actually returns.

What feedparser returns

I use feedparser, a Python library that parses RSS/Atom feeds into a structured object.

For each entry, it exposes fields like:

title

link

content or summary (the body, when present)

published (timestamp)
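Here is a minimal sketch of reading those fields from one parsed entry. It assumes feedparser's convention of exposing the full body as a list under `content`, where each item has a `value` key, and it falls back to `summary` when no full body is present. `entry_fields` is a hypothetical helper name, not part of feedparser.

```python
from typing import Any, Mapping


def entry_fields(entry: Mapping[str, Any]) -> dict[str, str]:
    """Pull title, link, published, and body out of one parsed feed entry."""
    # feedparser puts the full body (when the feed includes it) in a list
    # of dicts under "content"; "summary" is usually the excerpt.
    content = entry.get("content") or []
    if content:
        body = content[0].get("value", "")
    else:
        body = entry.get("summary", "")
    return {
        "title": entry.get("title", ""),
        "link": entry.get("link", ""),
        "published": entry.get("published", ""),
        "body": body,
    }
```

With feedparser installed, you would call it as `[entry_fields(e) for e in feedparser.parse("https://yourname.substack.com/feed").entries]`, where `yourname` is a placeholder for your publication's subdomain. Note that `body` is still raw HTML at this point.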

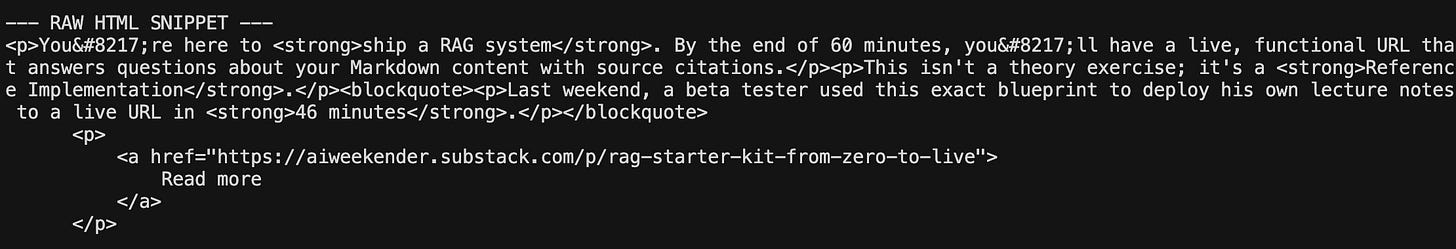

feedparser returns the feed payload as-is. If the feed contains HTML, you get HTML. If it contains truncated excerpts, you get excerpts. If URLs include tracking parameters, you get those too.
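Tracking parameters are the simplest of these to remove. A minimal standard-library sketch, assuming every `utm_*` query parameter counts as tracking noise (`TRACKING_PREFIXES` and `strip_tracking` are hypothetical names; extend the prefixes if your links carry others):

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: any query key starting with one of these prefixes is tracking.
TRACKING_PREFIXES = ("utm_",)


def strip_tracking(url: str) -> str:
    """Return url with tracking query parameters removed, other params kept."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
    ]
    # An empty query string makes urlunsplit drop the "?" entirely.
    return urlunsplit(parts._replace(query=urlencode(kept)))
```

Stripping these before indexing keeps the same post from showing up under several slightly different URLs.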

Here’s what a raw Substack RSS entry looks like:

If I indexed the raw entries as-is, my embeddings would include:

UI boilerplate (“Subscribe now”, “Share this post”)

HTML artifacts and spacing noise

truncation stubs that look like sentences but aren’t
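The first two items can be handled with Python's built-in HTMLParser. This is a sketch under simple assumptions: block-level tags mark paragraph breaks, `<script>` and `<style>` content is dropped, and a boilerplate line is any line that exactly matches a phrase in the hypothetical `BOILERPLATE` set, seeded with the two phrases above. It does not detect truncation stubs, which need a separate check.

```python
import re
from html.parser import HTMLParser

# Hypothetical boilerplate list, lowercase; extend it with phrases from your own feed.
BOILERPLATE = {"subscribe now", "share this post"}
# Tags treated as paragraph boundaries (an assumption; adjust to your markup).
BLOCK_TAGS = {"p", "div", "br", "li", "h1", "h2", "h3", "h4", "h5", "h6", "blockquote"}
SKIP_TAGS = {"script", "style"}


class TextExtractor(HTMLParser):
    """Collects visible text, inserting a line break at block-level tags."""

    def __init__(self) -> None:
        super().__init__(convert_charrefs=True)  # decode &amp; and friends
        self.parts: list[str] = []
        self.skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self.skip_depth += 1
        elif tag in BLOCK_TAGS:
            self.parts.append("\n")

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS:
            self.skip_depth = max(0, self.skip_depth - 1)
        elif tag in BLOCK_TAGS:
            self.parts.append("\n")

    def handle_data(self, data):
        if not self.skip_depth:
            # Newlines in HTML source are just whitespace, not paragraph breaks.
            self.parts.append(re.sub(r"\s+", " ", data))


def clean_html(html: str) -> str:
    """Convert an entry's HTML body to plain paragraphs without boilerplate lines."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    paragraphs = []
    for raw in "".join(parser.parts).splitlines():
        line = " ".join(raw.split())  # collapse leftover spacing noise
        if line and line.lower() not in BOILERPLATE:
            paragraphs.append(line)
    return "\n\n".join(paragraphs)
```

Exact-line matching is deliberately conservative: it only drops a paragraph when the whole paragraph is boilerplate, so a sentence that merely mentions subscribing survives.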

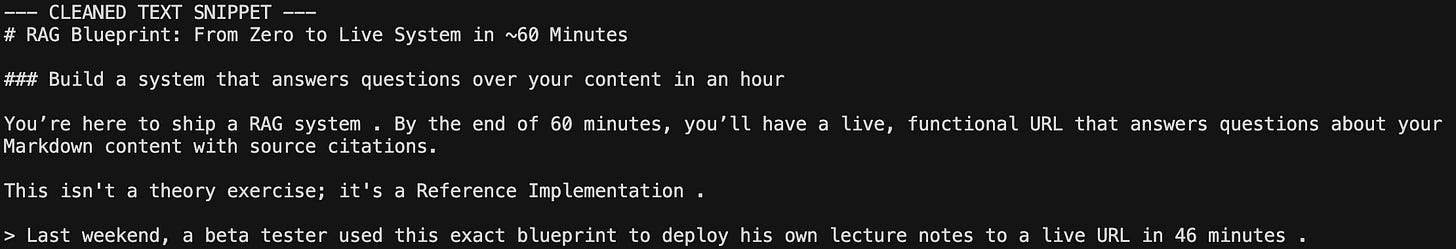

This means I have to clean each entry down to the post itself. Here’s what I want to embed instead:

Why this matters: garbage in, garbage out

In traditional ML, data cleaning is a baseline requirement because bad inputs produce bad predictions.

In a RAG system, bad data creates bad neighborhoods in embedding space.

When you ingest chunks with repetitive noise like “Subscribe now”, you’re essentially adding the same set of coordinates to every post. This acts like a mathematical anchor that can pull entirely different topics into the same cluster; how hard it pulls depends on what share of each chunk’s tokens is boilerplate.

This is a problem of token density. If your chunk is 200 words and 50 of them are boilerplate, a quarter of the text shaping your vector’s “direction” is noise.
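To check token density on your own chunks before embedding, you can measure the boilerplate share directly. A rough sketch, assuming simple word-level tokens (real embedding models use subword tokenizers, so counts will differ) and a hand-maintained phrase list; `boilerplate_share` is a hypothetical helper:

```python
import re


def boilerplate_share(chunk: str, phrases: list[str]) -> float:
    """Return the fraction of word tokens in chunk that belong to boilerplate phrases.

    Assumes simple word tokens and that the phrases don't overlap each other.
    """
    words = re.findall(r"\w+", chunk.lower())
    if not words:
        return 0.0
    noisy = 0
    for phrase in phrases:
        target = re.findall(r"\w+", phrase.lower())
        n = len(target)
        if n == 0:
            continue
        # Slide an n-word window across the chunk and count exact phrase matches.
        for i in range(len(words) - n + 1):
            if words[i:i + n] == target:
                noisy += n
    return min(1.0, noisy / len(words))
```

For the example above, a chunk of 150 content words plus 25 repetitions of “subscribe now” gives 50 / 200 = 0.25. A threshold on this number is one way to flag chunks worth re-cleaning.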

Even if the actual content of two passages is entirely different, a high share of common “noise” tokens can make them appear as nearest neighbors, diluting the signal of the actual content.

When the RAG system goes to find an answer, it doesn’t find the most relevant insight; it retrieves the most similar “noise.”

In short, if you ingest noise, you will retrieve noise.
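The nearest-neighbor effect is easy to see with a toy model. This sketch uses bag-of-words counts and cosine similarity as a stand-in for a neural embedding model, which is a simplification: real embeddings are contextual, so the numbers will differ, but shared tokens pulling two vectors together is the intuition being illustrated. The three strings are made-up examples.

```python
import math
from collections import Counter


def bow(text: str) -> Counter:
    """Bag-of-words counts: a crude stand-in for an embedding vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


post_a = "vector databases store embeddings for retrieval"
post_b = "sourdough bread needs a long cold fermentation"
noise = "subscribe now share this post subscribe now"

clean_sim = cosine(bow(post_a), bow(post_b))
noisy_sim = cosine(bow(post_a + " " + noise), bow(post_b + " " + noise))
print(f"clean: {clean_sim:.2f}  with boilerplate: {noisy_sim:.2f}")
# prints "clean: 0.00  with boilerplate: 0.63"
```

Two posts with no words in common score 0.00; append the same boilerplate to both and they score about 0.63, with nothing about databases or bread having changed.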

The Cost of Messy Data

Every developer building with LLMs eventually realizes the importance of clean, reliable data. You can have the best model in the world, but if your RAG pipeline is ingesting “Subscribe now” as core knowledge, your assistant’s answers will always feel slightly hallucinated.

Ingestion is where you define what “truth” looks like for your agent. If you skip the cleaning step, your system inherits every artifact in the raw XML feed, and its accuracy suffers.

If you’re building something similar: what’s your source format (RSS, docs site, Notion, PDFs), and what ingestion artifact keeps tripping you up?

Senior Builders’ Vault: Substack-to-Markdown Implementation

Building a stable data pipeline is 20% architecture and 80% data plumbing. You can spend your weekend reverse-engineering Substack’s HTML artifacts, or you can use my script.

This is the exact Python implementation I use to turn my RSS stream into clean, structured .md files. It handles the boring edge cases for you: